AI accelerators explained simply: learn what AI accelerators are, how GPUs, TPUs, and NPUs work, and why they power today’s fastest artificial intelligence systems.

Introduction: Why AI Accelerators Matter

Artificial intelligence can feel almost magical: chatbots answer questions instantly, phones recognize faces, and cars learn to drive. Behind all of this is specialized hardware that runs AI math at very high speed. These chips are called AI accelerators.

In simple terms, AI accelerators are chips designed specifically to run AI tasks faster and more efficiently than general-purpose computer processors. This article explains them simply, without technical overload, so anyone can understand how they work and why they matter.

What Are AI Accelerators? (Simple Definition)

An AI accelerator is a type of computer chip built to speed up artificial intelligence tasks such as:

- Image recognition

- Speech processing

- Language translation

- Machine learning training and inference

Unlike traditional CPUs, AI accelerators are optimized to perform many calculations at the same time, which is exactly what AI models need.

👉 Think of it this way:

- A CPU is like a smart multitasking office worker

- An AI accelerator is like a factory assembly line built for one job: AI math

Why Regular CPUs Are Not Enough for AI

CPUs are excellent at handling everyday tasks like browsing, emails, and spreadsheets. However, AI models rely heavily on:

- Matrix multiplication

- Parallel processing

- Massive data movement

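A CPU has a relatively small number of powerful cores that work through varied tasks very quickly, largely one step after another. AI, by contrast, needs thousands of simple calculations done at the same time. That’s where AI accelerators shine.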
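To see why parallel hardware helps, here is a minimal sketch in plain Python of matrix multiplication, the first item in the list above. It is a teaching example, not how real AI libraries implement it. The key point is that every output cell is computed independently of the others, which is exactly the kind of work an accelerator can spread across thousands of processing units at once.

```python
# Minimal sketch: plain matrix multiplication, the core math in most AI models.
# Each output cell C[i][j] depends only on row i of A and column j of B,
# so all cells could be computed at the same time on parallel hardware.

def matmul(a, b):
    """Multiply matrix a (n x k) by matrix b (k x m) using plain Python lists."""
    n, k, m = len(a), len(b), len(b[0])
    assert all(len(row) == k for row in a), "inner dimensions must match"
    c = [[0.0] * m for _ in range(n)]
    multiply_adds = 0
    for i in range(n):          # every (i, j) pair below is independent work
        for j in range(m):
            total = 0.0
            for p in range(k):
                total += a[i][p] * b[p][j]
                multiply_adds += 1
            c[i][j] = total
    return c, multiply_adds

a = [[1, 2], [3, 4]]
b = [[5, 6], [7, 8]]
c, ops = matmul(a, b)
print(c)    # [[19.0, 22.0], [43.0, 50.0]]
print(ops)  # 8 multiply-adds for a 2x2 by 2x2 product
```

Multiplying an n×k matrix by a k×m matrix takes n × k × m multiply-adds, so a single 4096×4096 by 4096×4096 product already needs about 68.7 billion of them.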

Main Types of AI Accelerators (Explained Simply)

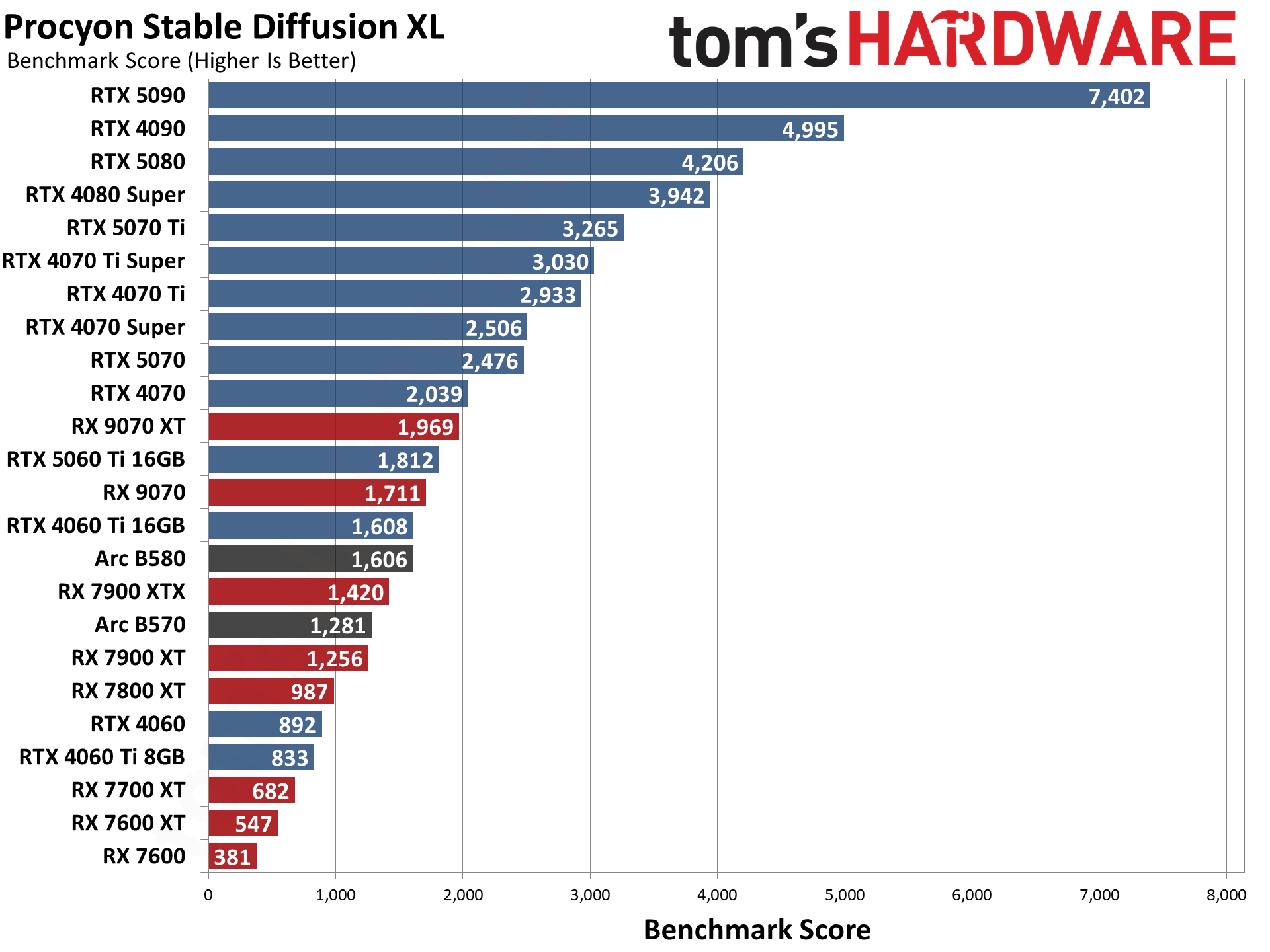

1. GPUs (Graphics Processing Units)

Originally made for: Video games

Now used for: AI, machine learning, data science

GPUs contain thousands of small cores that can perform calculations in parallel. This makes them well suited to training large AI models.

Simple explanation:

A GPU is like having 1,000 calculators working at the same time.

Common GPU brands:

- NVIDIA (RTX, A100, H100)

- AMD (Instinct series)
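The GPU programming style can be sketched the same way. This is a conceptual illustration under stated assumptions: it writes a small "kernel" that computes one output cell and launches it over every cell using Python's standard `concurrent.futures` thread pool, loosely mirroring how GPU programming models such as NVIDIA's CUDA assign one lightweight thread per piece of data. The names `make_kernel` and `launch` are hypothetical, and Python threads will not deliver real GPU-style speedups.

```python
from concurrent.futures import ThreadPoolExecutor
from itertools import product

# Conceptual sketch of the GPU style: a tiny "kernel" computes ONE output cell,
# then it is launched over every cell. Python threads only show the pattern here;
# they do not provide real GPU-style speedups.

def make_kernel(a, b):
    """Return a hypothetical kernel that computes a single cell of a @ b."""
    def kernel(index):
        i, j = index
        return sum(a[i][p] * b[p][j] for p in range(len(b)))
    return kernel

def launch(a, b, workers=4):
    """Run the kernel once per output cell and rebuild the result matrix."""
    n, m = len(a), len(b[0])
    kernel = make_kernel(a, b)
    indices = list(product(range(n), range(m)))  # one unit of work per output cell
    with ThreadPoolExecutor(max_workers=workers) as pool:
        results = list(pool.map(kernel, indices))  # map preserves input order
    # Reassemble the flat, row-major results into an n x m matrix
    return [results[r * m:(r + 1) * m] for r in range(n)]

print(launch([[1, 2], [3, 4]], [[5, 6], [7, 8]]))  # [[19, 22], [43, 50]]
```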

2. TPUs (Tensor Processing Units)

Created by: Google

Designed for: Machine learning only

TPUs are custom-built AI accelerators optimized for neural networks. They are extremely fast and energy-efficient but mostly available through Google Cloud.

Simple explanation:

A TPU is a special-purpose AI engine, not a general computer chip.
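Neural networks are built largely from layers that multiply a matrix of learned weights by an input vector, add a bias, and apply a simple activation function. The minimal sketch below shows one such layer in plain Python with made-up weights; a TPU is designed to run this same pattern in dedicated hardware, on far larger matrices and many inputs at once.

```python
# Minimal sketch of one dense neural-network layer: y = relu(W x + b).
# Weights, bias, and input are made-up values for illustration.

def dense_layer(weights, bias, x):
    """Compute relu(weights @ x + bias) for one input vector x."""
    out = []
    for row, b in zip(weights, bias):
        z = sum(w * xi for w, xi in zip(row, x)) + b   # multiply-accumulate
        out.append(max(0.0, z))                        # ReLU activation
    return out

weights = [[0.5, -1.0, 2.0],
           [1.0, 1.0, -1.0]]
bias = [0.0, -0.5]
x = [1.0, 2.0, 3.0]
print(dense_layer(weights, bias, x))  # [4.5, 0.0]
```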

3. NPUs (Neural Processing Units)

Found in: Smartphones, laptops, edge devices

NPUs are smaller AI accelerators designed for on-device AI, such as:

- Face unlock

- Voice assistants

- Photo enhancement

Simple explanation:

An NPU lets your device run AI without sending data to the cloud, improving speed and privacy.
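One common reason NPUs can be so power-efficient is that they typically favor low-precision integer math, such as 8-bit integers (int8), over 32-bit floating point. The sketch below shows a simple symmetric int8 quantization scheme purely as an illustration; it is an assumption-level example, and real NPU toolchains use their own, more sophisticated methods.

```python
# Minimal sketch of symmetric int8 quantization (illustrative only; real NPU
# toolchains differ). Weights are stored as small integers plus one scale factor.

def quantize_int8(values):
    """Map floats to integers in [-127, 127] using one shared scale factor."""
    max_abs = max(abs(v) for v in values)
    scale = max_abs / 127 if max_abs > 0 else 1.0
    q = [max(-127, min(127, round(v / scale))) for v in values]
    return q, scale

def dequantize(q, scale):
    """Recover approximate floats from the int8 values."""
    return [qi * scale for qi in q]

weights = [0.82, -1.27, 0.05, 0.4]
q, scale = quantize_int8(weights)
print(q)  # [82, -127, 5, 40]
approx = dequantize(q, scale)
print(max(abs(a - w) for a, w in zip(approx, weights)))  # small rounding error
```

Each weight now fits in one byte instead of four, at the cost of a rounding error no larger than half the scale factor.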

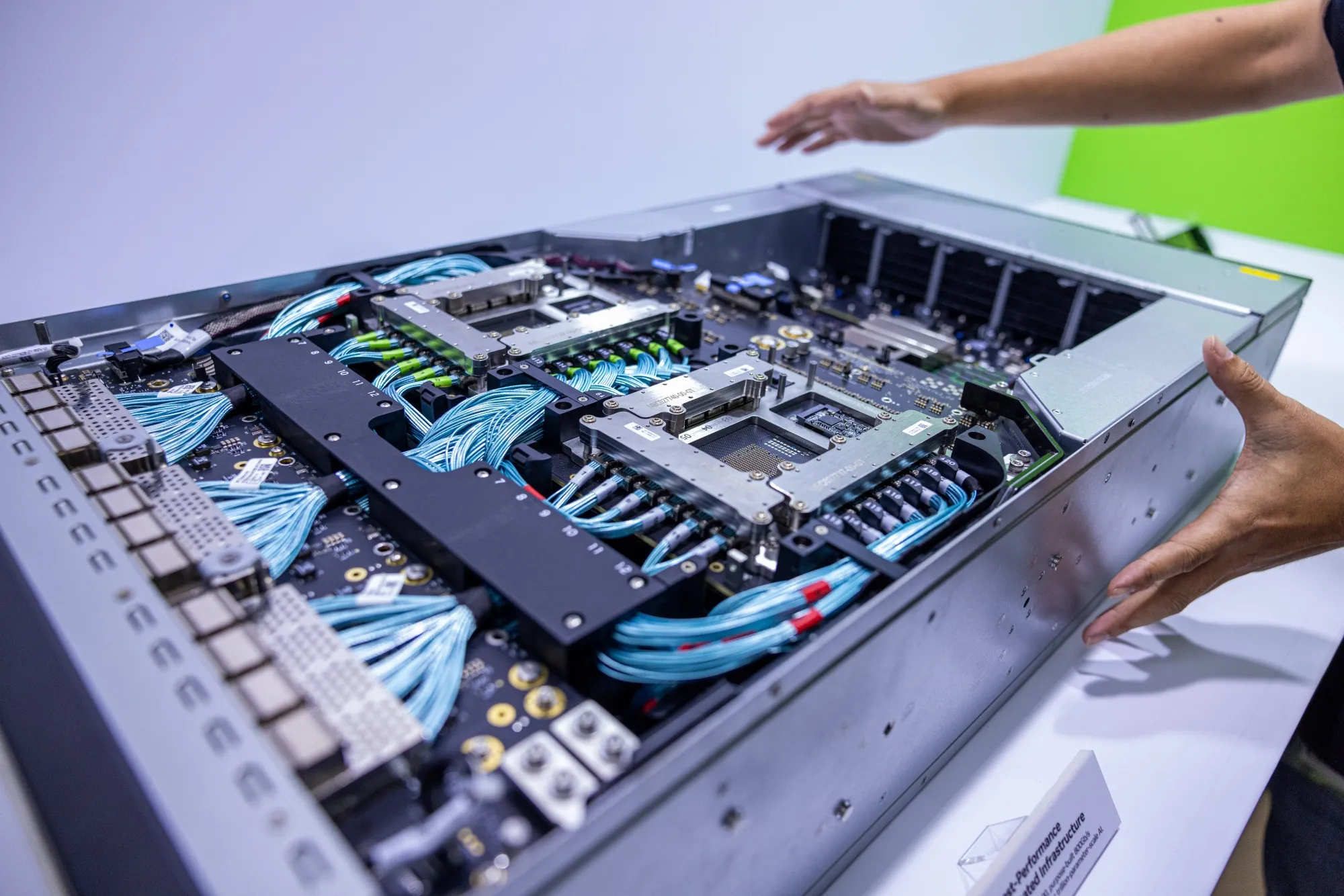

4. ASICs (Application-Specific Integrated Circuits)

ASICs are chips built for one exact task. In AI, they are designed for maximum efficiency at scale, especially in data centers.

Example: custom inference chips that large tech companies build for their own data centers, such as AWS Inferentia. Google’s TPU is also a type of ASIC.

How AI Accelerators Actually Work (Step by Step)

Let’s break it down simply:

1️⃣ Data (images, text, audio) enters the AI model

2️⃣ The accelerator performs millions (in large models, billions) of math operations in parallel

3️⃣ Intermediate results pass through the model’s layers in quick succession

4️⃣ Output is delivered (answer, prediction, image, etc.)
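The four steps can be traced in a tiny, made-up classifier. The class labels, weights, and input features below are hypothetical; the point is only to show data going in as numbers, independent math producing scores, and an answer coming out.

```python
# Minimal sketch of the four steps, using a tiny hypothetical model.

LABELS = ["cat", "dog"]            # hypothetical output classes
WEIGHTS = [[0.9, 0.1, -0.4],       # hypothetical learned weights, one row per class
           [-0.2, 0.8, 0.5]]

def predict(features):
    # Step 2: one score per class; each dot product is independent,
    # so an accelerator could compute all of them in parallel
    scores = [sum(w * f for w, f in zip(row, features)) for row in WEIGHTS]
    # Step 3: turn the scores into a decision by picking the highest one
    best = max(range(len(scores)), key=lambda k: scores[k])
    # Step 4: deliver the output
    return LABELS[best], scores

# Step 1: an input already converted to numbers (for example, image features)
label, scores = predict([1.0, 0.2, 0.1])
print(label)  # cat
```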

Because AI accelerators handle many operations at once, tasks that could take seconds or minutes on a CPU alone can often finish in milliseconds.
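A back-of-the-envelope calculation shows where the speedup comes from: time is roughly the number of operations divided by how many operations the chip can do per second. Both throughput figures below are hypothetical round numbers chosen for illustration, not specifications of any real CPU or accelerator, and real performance also depends on memory speed and software.

```python
# Back-of-the-envelope sketch: time = operations / operations-per-second.
# Both throughput figures are hypothetical round numbers, not real chip specs.

def seconds_needed(operations, ops_per_second):
    return operations / ops_per_second

ops = 2 * 4096 ** 3          # one 4096x4096 matrix product, counting each multiply and add
cpu_rate = 1e11              # hypothetical CPU: 100 billion ops per second
accel_rate = 1e14            # hypothetical accelerator: 100 trillion ops per second

print(f"CPU:         {seconds_needed(ops, cpu_rate):.3f} s")
print(f"Accelerator: {seconds_needed(ops, accel_rate) * 1000:.3f} ms")
```

With these assumed numbers, the same matrix product takes roughly 1.4 seconds on the CPU and roughly 1.4 milliseconds on the accelerator, a 1,000× difference that comes entirely from the assumed throughput gap.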

AI Training vs AI Inference (Easy Explanation)

AI Training

- Teaching an AI model using large datasets

- Requires massive computing power

- Mostly done on GPUs and TPUs

AI Inference

- Using a trained model to make predictions

- Happens on phones, laptops, servers

- Uses GPUs, NPUs, or ASICs

👉 Training is like teaching a student, inference is like asking them a question.
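The difference between training and inference can be shown with a one-weight model, y = w × x. In this minimal sketch, training uses plain gradient descent (one common training method, chosen here for simplicity) to repeatedly adjust w over made-up data, while inference is just one multiplication with the learned weight.

```python
# Minimal sketch contrasting training and inference on a one-weight model y = w * x.
# Training repeats forward passes plus weight updates (gradient descent);
# inference is a single forward pass with the learned weight. Data is made up.

def forward(w, x):
    return w * x

def train(data, lr=0.05, epochs=200):
    w = 0.0
    for _ in range(epochs):
        for x, y in data:
            error = forward(w, x) - y
            w -= lr * 2 * error * x   # gradient of (w*x - y)^2 with respect to w
    return w

data = [(1.0, 3.0), (2.0, 6.0), (3.0, 9.0)]   # underlying rule: y = 3x
w = train(data)          # training: many passes over the data
print(round(w, 3))       # close to 3.0
print(forward(w, 4.0))   # inference: one quick computation, about 12
```

Training loops over the data hundreds of times here; inference is a single multiplication. Real models scale both up enormously, which is why training usually needs data-center accelerators while inference can run on a phone’s NPU.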

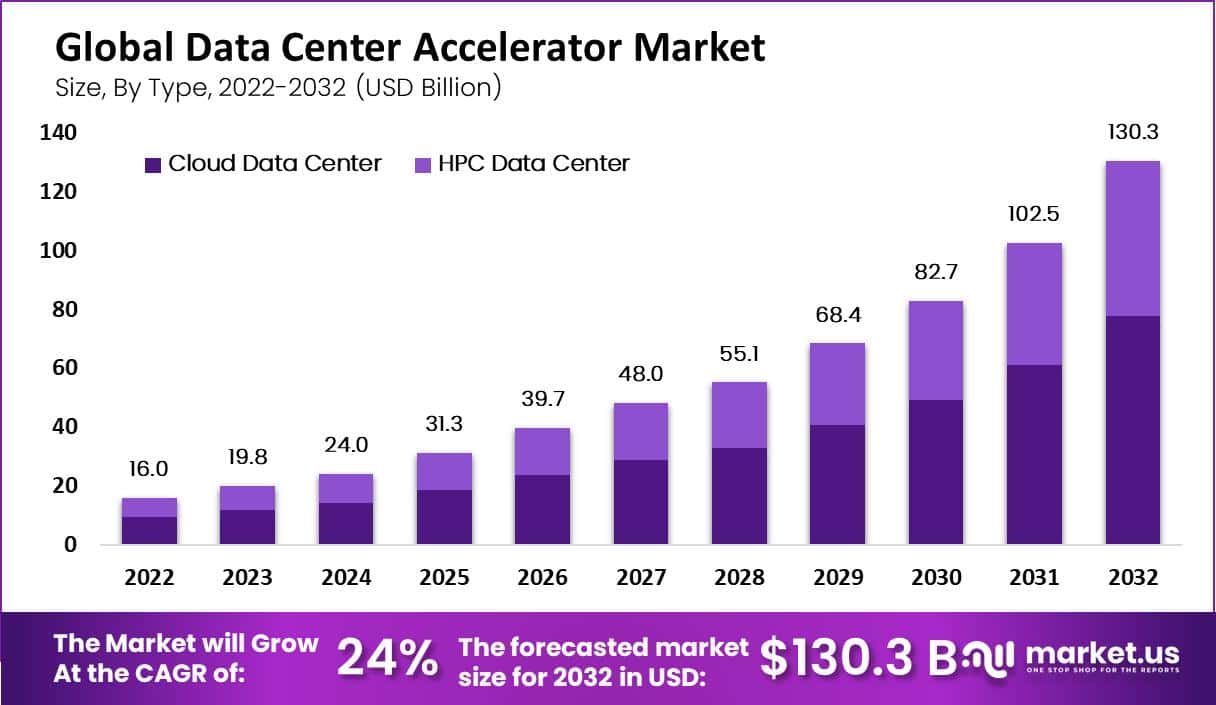

Where AI Accelerators Are Used Today

Everyday Devices

- Smartphones (camera AI, voice assistants)

- Laptops (Copilot+ PC features on Windows, built-in machine learning features on Macs)

Data Centers

- Chatbots and large language models

- Recommendation engines (Netflix, YouTube)

Automotive

- Self-driving systems

- Driver assistance features

Healthcare

- Medical imaging

- Disease prediction

Benefits of AI Accelerators

- ⚡ Faster AI processing

- 🔋 Better energy efficiency

- 🔒 Improved data privacy (on-device AI)

- 📈 Scalable performance

Without AI accelerators, modern AI would be slow, expensive, and impractical.

AI Accelerators vs CPUs: Simple Comparison

| Feature | CPU | AI Accelerator |
|---|---|---|
| Task Type | General-purpose | AI-specific |
| Parallel Processing | Limited | Massive |
| Energy Efficiency | Lower | Higher |
| AI Performance | Moderate | Extremely high |

Common Myths About AI Accelerators

❌ “AI accelerators replace CPUs”

✅ They work alongside CPUs, not replace them.

❌ “Only big companies need AI accelerators”

✅ Many phones and laptops now include NPUs for everyday users.

❌ “AI accelerators are only for training”

✅ Inference is equally important and widely used.

FAQs: AI Accelerators Explained Simply

1. What is an AI accelerator in simple words?

A chip designed to make AI tasks run faster and more efficiently.

2. Is a GPU an AI accelerator?

Yes, GPUs are the most common AI accelerators today.

3. Do laptops have AI accelerators?

Yes, many laptops released in 2025 include NPUs for on-device AI.

4. Are AI accelerators expensive?

High-end data center chips are costly, but consumer devices include affordable versions.

5. Do AI accelerators improve battery life?

Often, yes. NPUs typically run AI tasks such as photo enhancement or voice processing using less power than a CPU or GPU would, which helps save battery.

6. Will AI accelerators become standard hardware?

Very likely. NPUs are already becoming standard in new phones and laptops, much as GPUs did before them.

Conclusion: Why AI Accelerators Are the Future

Now that you’ve seen AI accelerators explained simply, one thing is clear: they are the silent engines behind modern AI. From smartphones to supercomputers, AI accelerators make artificial intelligence faster, more efficient, and more accessible.

As AI continues to grow, these specialized chips are likely to become a standard part of most computing devices, shaping the future of computing in ways we’re only beginning to imagine.